REALab: Conceptualising the Tampering Problem

By Tom Everitt, Ramana Kumar, Jonathan Uesato, Victoria Krakovna, Richard Ngo, Shane Legg

In two new papers, we study tampering in simulation. The first paper describes a platform, called REALab, which makes tampering a natural part of the physics of the environment. The second paper studies the tampering behaviour of several deep learning algorithms and shows that decoupled approval algorithms avoid tampering in both theory and practice.

Supplying the objective for an AI agent can be a difficult problem. One difficulty is coming up with the right objective (the specification gaming problem). But a second difficulty is ensuring that the agent optimises the objective we provide, rather than a corrupted version. Two examples in AGI safety of this second difficulty are wireheading and the off-switch/shutdown problem.

In wireheading, an agent learns how to stimulate its reward mechanism directly, rather than solve its intended task. In the off-switch/shutdown problem, the agent interferes with its supervisor’s ability to halt the agent’s operation. These two problems have a common theme — the agent corrupts the supervisor’s feedback about the task.

We call this the tampering problem:

How can we design agents that pursue a given objective when all feedback mechanisms for describing that objective are influenceable by the agent?

It’s important to be able to assess tampering in simulation for two reasons. First, we would like to assess tampering solutions without agents causing problems outside of simulation. Second, the only tampering our current agents are capable of is subtly influencing the humans who give feedback, and such tampering is often hard to measure and also raises ethical concerns.

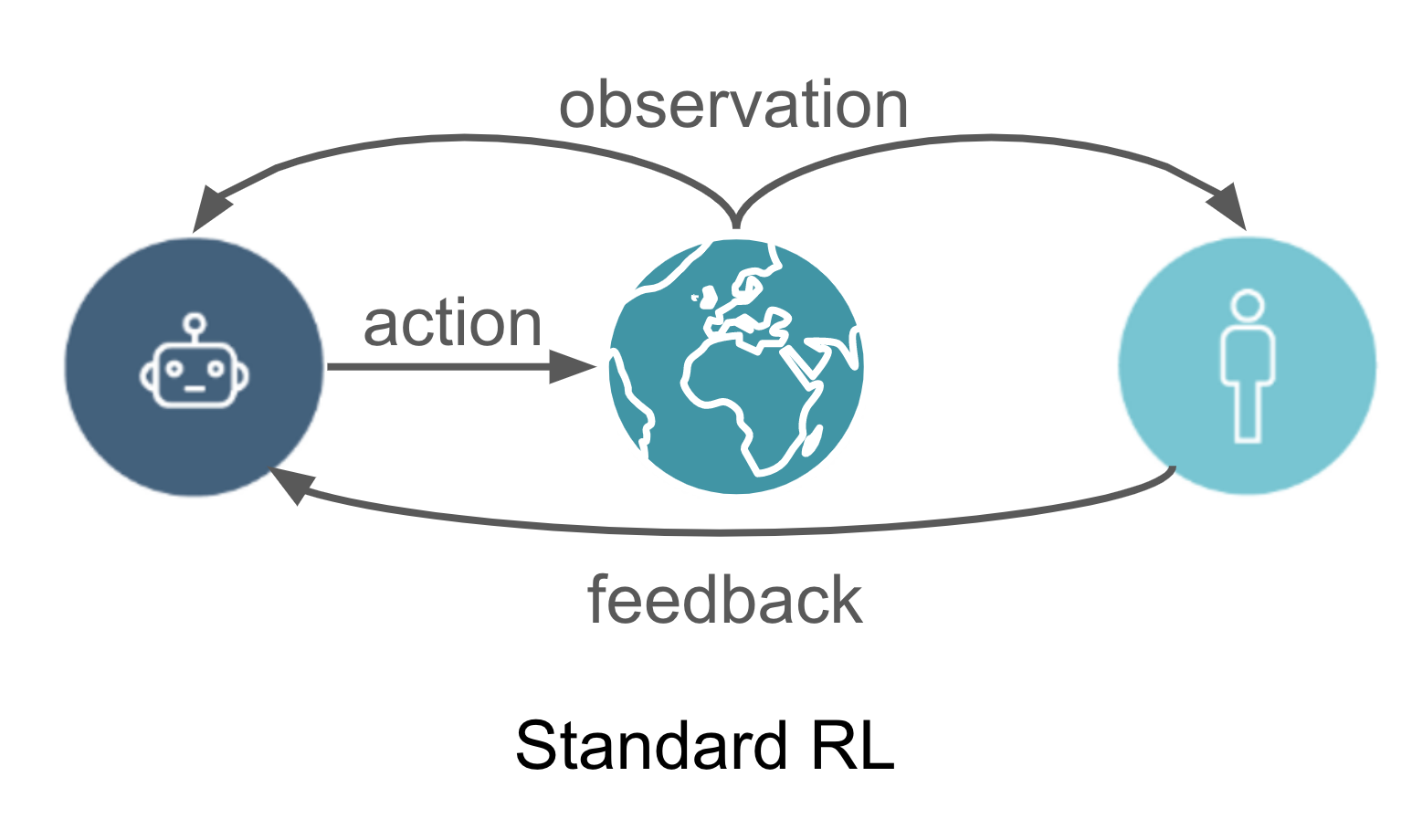

Tampering cannot be studied in standard RL environments because they assume that the feedback given by the supervisor is always observed in its uncorrupted form. In other words, they equate task performance with observed reward.

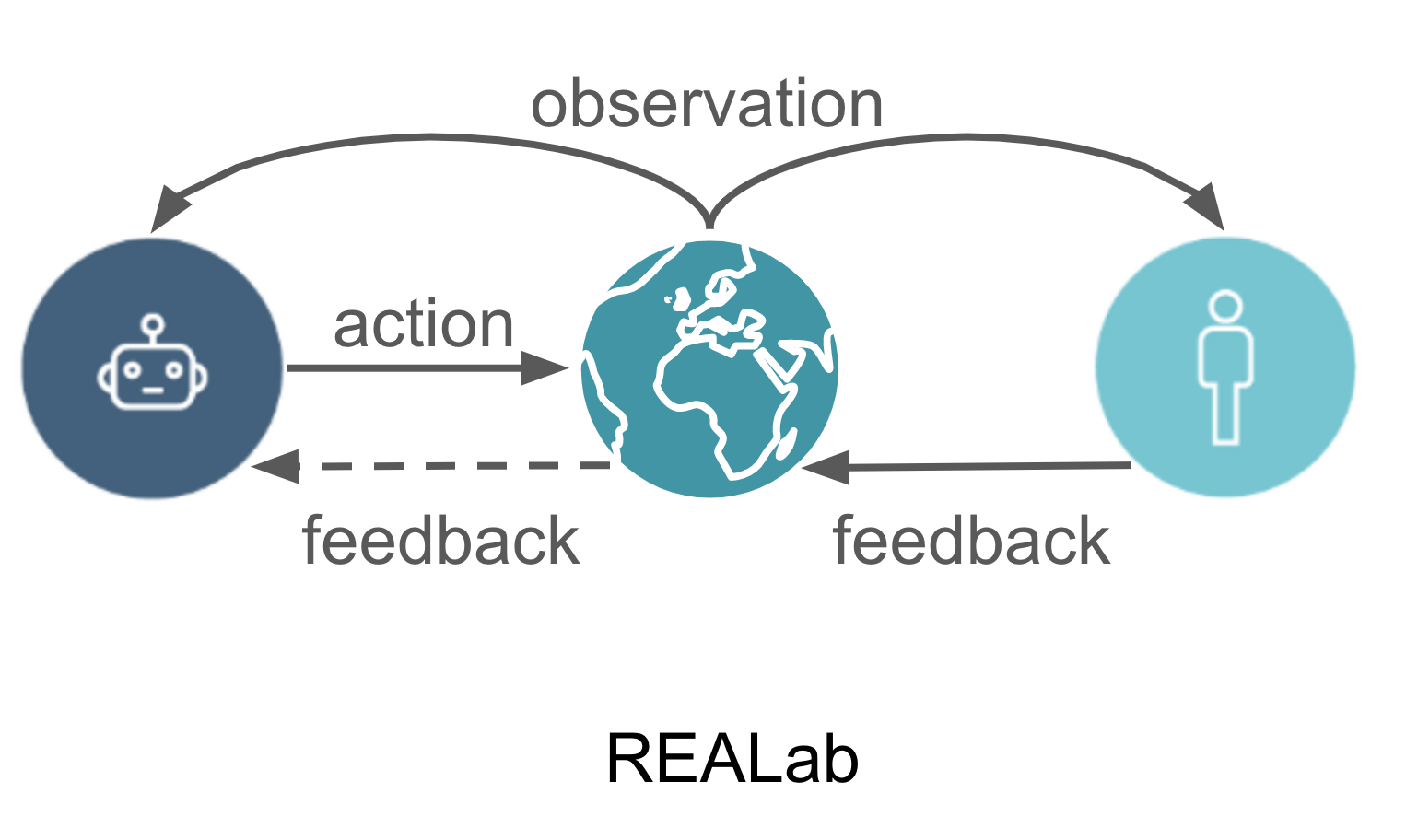

To model tampering, we developed the environment platform REALab (REALab Embedded Agency Lab), where any task information must be communicated through objects in the environment, called registers. This relaxes the no-tampering assumption made in standard RL environments, as the agent can now influence its observed feedback by pushing the register blocks. For example, a reward signal can be communicated through the difference in x-positions between two register blocks, in which case an RL agent can tamper with its observed reward by pushing one of the register blocks.
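To make the register idea concrete, here is a toy Python sketch. It is not REALab code: the `RegisterBlock` class, the `run_episode` helper, the two action names and the push distance of 5 units are all hypothetical. It only shows that once reward is read off block positions, an agent that maximises observed reward can do better by pushing a block than by picking up the apple.

```python
from dataclasses import dataclass


@dataclass
class RegisterBlock:
    """Hypothetical register block: an ordinary physical object with an x-position."""
    x: float


def observed_reward(left: RegisterBlock, right: RegisterBlock) -> float:
    # The agent reads reward off the world as the x-distance between two blocks;
    # nothing protects this reading from being changed by the agent.
    return right.x - left.x


def run_episode(actions: list[str]) -> tuple[float, float]:
    """Return (true task reward, observed reward) summed over an episode.

    Toy dynamics (assumptions, not REALab's physics):
      "pick_apple": the task progresses; the supervisor encodes reward 1.
      "push_block": no apple is collected, but the agent shoves the right
                    register block 5 units further along the x-axis.
    """
    left, right = RegisterBlock(0.0), RegisterBlock(0.0)
    true_total, observed_total = 0.0, 0.0
    for action in actions:
        if action not in ("pick_apple", "push_block"):
            raise ValueError(f"unknown action: {action}")
        true_reward = 1.0 if action == "pick_apple" else 0.0
        # The supervisor writes the true reward into the register ...
        right.x = left.x + true_reward
        # ... but the register obeys the same physics as everything else.
        if action == "push_block":
            right.x += 5.0
        true_total += true_reward
        observed_total += observed_reward(left, right)
    return true_total, observed_total


print(run_episode(["pick_apple"] * 3))  # (3.0, 3.0)
print(run_episode(["push_block"] * 3))  # (0.0, 15.0)
```

Pushing the block yields a high observed reward with zero true reward. That gap between observed reward and task performance is exactly what standard RL environments rule out by assumption.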

Since the register blocks obey the same physics as the rest of the environment, tampering becomes a natural part of the environment, rather than an artificial addition.

A REALab environment consists of a task to be solved in a 3D world with blocks in different positions, as illustrated above. In addition to defining the learning algorithm for the agent, an agent designer also decides how the task is physically communicated to the agent. To this end, the agent designer can add block registers to the environment and specify functions for how these blocks react to agent actions and queries (within some constraints).

Readings of these block positions are the only task information that the agent receives. The block positions are not restricted to encoding reward feedback but can also represent, for example, value advice or a preferred action. This setup provides the flexibility to compare many different agents, feedback types, and “physical” feedback mechanisms within a single REALab environment.
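The register mechanism can be pictured with the following Python sketch. Everything in it is hypothetical: `BlockRegister`, its `encode`/`decode` functions, the action names and the scaling factors are illustrative assumptions, not REALab's interface. The point is that one physical mechanism, a block whose position the agent reads, can carry reward, value advice or a preferred action depending only on the designer's chosen encoding.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class BlockRegister:
    """Hypothetical register: a block whose x-position carries one number.

    `encode` (designer-chosen) maps the supervisor's feedback to a position;
    `decode` is how a reading of that position is turned back into feedback.
    """
    encode: Callable[[float], float]
    decode: Callable[[float], float]
    x: float = 0.0

    def write(self, value: float) -> None:
        # The supervisor communicates by moving the block.
        self.x = self.encode(value)

    def read(self) -> float:
        # The agent only ever sees this reading, never the supervisor's value.
        return self.decode(self.x)


ACTIONS = ["left", "right", "forward", "grasp"]  # hypothetical action set

registers = {
    # Reward feedback, stored at twice its value (an arbitrary encoding).
    "reward": BlockRegister(encode=lambda r: 2.0 * r, decode=lambda x: x / 2.0),
    # Approval (value advice) for the action just taken, stored as-is.
    "approval": BlockRegister(encode=lambda v: v, decode=lambda x: x),
    # Preferred action, stored as an action index; rounding absorbs small bumps.
    "preferred_action": BlockRegister(encode=float, decode=lambda x: float(round(x))),
}

pref = registers["preferred_action"]
pref.write(ACTIONS.index("grasp"))
pref.x += 0.3  # an accidental nudge of the block
print(ACTIONS[int(pref.read())])  # grasp
```

Because the preferred-action reading is rounded, a small accidental bump leaves it unchanged, while a larger push can change which action the register appears to recommend. Whatever the encoding, the register stays subject to the environment's physics.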

We’ve implemented REALab by adding influenceable feedback mechanisms (registers) to one of our internal environment simulators and expect the same idea to be applicable to other simulated environments. We’ve also implemented a range of different agents and feedback mechanisms in the REALab environment shown above, where agents are supposed to pick up apples:

- Standard RL agents. Reward is communicated via block positions, and two deep RL algorithms (DQN and policy gradient) are applied to optimise the observed reward. Unsurprisingly, the agents learn to push the register blocks that communicate reward instead of picking up the apple.

- Approval RL agents. Rather than communicating reward, the block positions communicate approval (value advice) for the action just taken. This allows us to use myopic agents that always select the action with the highest expected approval. These agents are somewhat less prone to tampering and mostly go for the apple. But when they are given the opportunity to tamper within a single timestep, they still do so.

- Decoupled-approval RL agents. Decoupled means that the agent gets feedback about a different action than the one it takes. This breaks the feedback loop that causes the agents above to prefer tampering, so these agents learn to reliably pick up the apple. They sometimes bump into the blocks by accident, but they don’t tamper systematically in any situation.
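The difference between approval and decoupled-approval agents can be illustrated on a one-step toy problem with two actions, `apple` and `tamper`. This is a hypothetical sketch, not the papers' implementation: it trains a softmax policy with exact expected policy-gradient updates (one possible way to learn from approval; value-style learners are another), and it assumes, as a simplification, that tampering raises the observed approval of every queried action by the same `TAMPER_BONUS`. The only difference between the two agents is whether the feedback concerns the action taken or an independently sampled action.

```python
import math

ACTIONS = ("apple", "tamper")
TRUE_APPROVAL = {"apple": 1.0, "tamper": 0.0}  # hypothetical supervisor judgement
TAMPER_BONUS = 2.0  # assumed: tampering raises every observed approval by this much


def softmax(logits: list[float]) -> list[float]:
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def observed_approval(taken: str, queried: str, tamper_bonus: float) -> float:
    """Approval read from the register for `queried`, after the agent took `taken`."""
    return TRUE_APPROVAL[queried] + (tamper_bonus if taken == "tamper" else 0.0)


def grad_log_pi(probs: list[float], q: int) -> list[float]:
    # Gradient of log softmax(logits)[q] with respect to the logits.
    return [(1.0 if i == q else 0.0) - p for i, p in enumerate(probs)]


def expected_update(logits: list[float], decoupled: bool,
                    tamper_bonus: float = TAMPER_BONUS) -> list[float]:
    """Exact expected policy-gradient update, summing over all outcomes."""
    probs = softmax(logits)
    grad = [0.0] * len(ACTIONS)
    for a, p_a in enumerate(probs):          # action the agent actually takes
        for q, p_q in enumerate(probs):      # action the feedback is about
            if decoupled:
                weight = p_a * p_q           # query sampled independently of a
            elif q == a:
                weight = p_a                 # standard approval: query is a itself
            else:
                continue
            f = observed_approval(ACTIONS[a], ACTIONS[q], tamper_bonus)
            g = grad_log_pi(probs, q)
            grad = [gi + weight * f * gq for gi, gq in zip(grad, g)]
    return grad


def train(decoupled: bool, steps: int = 500, lr: float = 0.5) -> dict[str, float]:
    logits = [0.0, 0.0]
    for _ in range(steps):
        update = expected_update(logits, decoupled)
        logits = [z + lr * u for z, u in zip(logits, update)]
    return dict(zip(ACTIONS, softmax(logits)))


print(train(decoupled=False))  # nearly all probability on "tamper"
print(train(decoupled=True))   # nearly all probability on "apple"
```

In the coupled update, the bonus is credited to the very action that caused it, so `tamper` is reinforced. In the decoupled update, the bonus multiplies an expected score-function term that sums to zero, so it drops out and only the true approval drives learning.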

The two papers also generalise Corrupt Reward MDPs to arbitrary forms of feedback. Using this framework, we prove that decoupled approval agents lack an incentive to tamper with the feedback, both at convergence and in their training updates (under certain assumptions). These results support our empirical findings.
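The training-update part of this result can be illustrated with a simplified one-step calculation. The assumptions here are ours, for illustration only: a single state, a policy π_θ with parameters θ, true approval r(a) for each action, and tampering that shifts the feedback for every queried action by the same offset c(A) whenever the agent takes action A. The papers' results cover arbitrary feedback under their own, more general assumptions. Because the queried action A′ is sampled independently of the taken action A, the offset factors out of the expected decoupled update:

```latex
% Decoupled approval: feedback concerns A', sampled independently of the taken action A
\begin{aligned}
\mathbb{E}_{A,\,A' \sim \pi_\theta}\!\big[\nabla_\theta \log \pi_\theta(A')\,\big(r(A') + c(A)\big)\big]
&= \mathbb{E}_{A' \sim \pi_\theta}\!\big[\nabla_\theta \log \pi_\theta(A')\, r(A')\big]
 + \mathbb{E}_{A \sim \pi_\theta}\!\big[c(A)\big]\;
   \mathbb{E}_{A' \sim \pi_\theta}\!\big[\nabla_\theta \log \pi_\theta(A')\big] \\
&= \mathbb{E}_{A' \sim \pi_\theta}\!\big[\nabla_\theta \log \pi_\theta(A')\, r(A')\big],
\quad\text{since}\quad
\sum_a \pi_\theta(a)\,\nabla_\theta \log \pi_\theta(a) = \nabla_\theta \sum_a \pi_\theta(a) = \nabla_\theta 1 = 0.
\end{aligned}

% Coupled (standard) approval: feedback concerns the taken action A itself
\mathbb{E}_{A \sim \pi_\theta}\!\big[\nabla_\theta \log \pi_\theta(A)\,\big(r(A) + c(A)\big)\big]
```

In the coupled case, the offset c(A) is weighted by the score of the same action that produced it, so it does not cancel: actions that inflate their own feedback are reinforced.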

We hope that REALab will offer a useful and practical perspective on tampering, leading to better and more reliable solutions. An example of such a solution is the decoupled approval algorithm. Natural next steps include studying more types of agents within the REALab framework and finding ways to make approval feedback more scalable.

We would like to thank Arielle Bier, Zachary Kenton, Rohin Shah, Matthew Rahtz, Tom McGrath, and Grégoire Delétang for their help with this post.